Failure: playing video from audio cassette

Update 2022-07-12: I recently received an email from Pablo Vidal (@this_may_be_it), who had success with a similar project using a MiniDisc player; see the end of this post for details.

The idea for this project came from a few different places:

- Curiosity about how cable modems actually transmit ones and zeros along the coax cable. This led to some reading about the various modulation methods used.

- Code I’d written to read the data on cassette tapes containing files from the Dragon 32 computer of my youth. (Including this one.) The modulation here is fairly simple, being one cycle of a certain tone for a ‘0’ and one cycle of a different tone for a ‘1’.

- Some fairly superficial reading about video codecs.

I wondered what could be achieved with cable-modem-style modulation techniques in the way of putting data onto audio cassette tapes. In particular: could you read enough bits per second from a cassette tape to get watchable video?

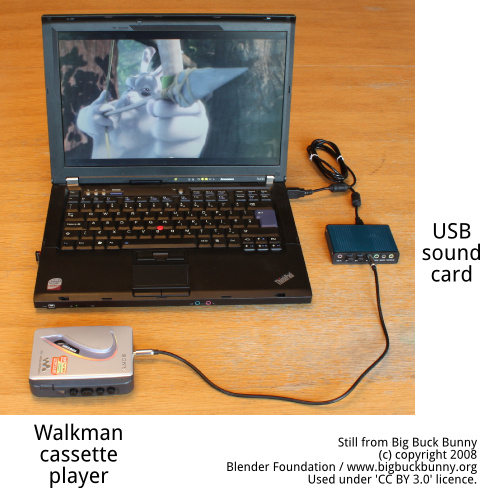

The goal, then, was to make a system which decoded video from a normal audio cassette tape, such that the combination of a Walkman and a laptop could play a movie. This non-functional mock-up gives the idea:

You would be able to stop the tape to pause, or fast-forward or rewind through the video. The external USB sound card was required to get stereo audio capture.

Alas, I did not get this to work, but did come across a few interesting things while trying.

Video encoding

After dithering backwards/forwards between Google’s VP8 and the x264 implementation of H.264, I went with x264. The main selling point was x264’s ‘hard variable bitrate limit’ mode, necessary to avoid buffer underflow. I could not find an equivalent under VP8.

The x264 encoder can produce a fairly raw data-stream, which it turns out is made up of blocks called ‘NAL units’. I did not go into the full details of the data-stream format, but after a bit of experimenting, did get a simple program using libavconv which read the file, processed the NALs, and emitted YUV pixel data to a spawned mplayer process. So far so good.

One amusing coincidence: while developing this program, I got an error message from libavconv about ‘releasing zombie picture’, which was pretty funny given that the video source I was using for the experiments was Zombieland.

Modulation

The modulation technique used in cable modems is usually QAM (quadrature amplitude modulation). Briefly, the idea is to encode two signals, one onto the amplitude of each of two orthogonal carriers, variously called ‘cos’/‘sin’, ‘real’/‘imaginary’, or ‘in-phase’/‘quadrature’. Each of the signals carries half of the bits.
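As a rough illustration of the transmit side, here is a minimal Python sketch. It assumes the two signals have already been turned into one amplitude per audio sample (a real modem would first shape each symbol with a filter), and it uses the common convention s = I·cos − Q·sin; the function name `qam_modulate` is hypothetical.

```python
import math


def qam_modulate(i_vals, q_vals, carrier_hz, sample_rate):
    """Put two baseband signals onto orthogonal carriers.

    i_vals and q_vals hold one amplitude per output sample; the result is
    s[n] = I[n]*cos(2*pi*f*n/fs) - Q[n]*sin(2*pi*f*n/fs).
    """
    out = []
    for n, (i, q) in enumerate(zip(i_vals, q_vals)):
        phase = 2 * math.pi * carrier_hz * n / sample_rate
        out.append(i * math.cos(phase) - q * math.sin(phase))
    return out


# Example: a constant I = 1, Q = 0 signal is just the cosine carrier.
samples = qam_modulate([1.0] * 4, [0.0] * 4, carrier_hz=1000, sample_rate=4000)
```

Because cos and sin are orthogonal over whole carrier cycles, the receiver can separate the two amplitudes again even though they share one audio channel.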

At the receiving end, the two signals are pulled apart again. Each recovered signal is sampled some number of times per second, and at the sampling instant it should have one of a fixed number of distinct values. In practice there are various sources of noise, but as long as one value doesn’t blur into the next, we’re OK. In between sampling instants, the signal can wander.
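The receiving side can be sketched the same way. This hypothetical `qam_demodulate` mixes the signal down by multiplying with 2cos and −2sin, averages over each symbol as a crude low-pass filter, and so produces one (I, Q) sample per symbol. It assumes rectangular symbols, perfect timing, and a whole number of carrier cycles per symbol, none of which holds on real tape.

```python
import math


def qam_demodulate(signal, carrier_hz, sample_rate, samples_per_symbol):
    """Recover one (I, Q) pair per symbol from a real QAM signal.

    Assumes rectangular symbols and perfect timing: symbol k occupies
    samples k*samples_per_symbol .. (k+1)*samples_per_symbol - 1.
    """
    symbols = []
    last_start = len(signal) - samples_per_symbol
    for start in range(0, last_start + 1, samples_per_symbol):
        i_acc = q_acc = 0.0
        for n in range(start, start + samples_per_symbol):
            phase = 2 * math.pi * carrier_hz * n / sample_rate
            # Mixing shifts each carrier to 0 Hz, leaving extra terms
            # at twice the carrier frequency.
            i_acc += 2 * signal[n] * math.cos(phase)
            q_acc += -2 * signal[n] * math.sin(phase)
        # Averaging over the symbol is the low-pass filter; with a whole
        # number of carrier cycles per symbol the 2f terms cancel exactly.
        symbols.append((i_acc / samples_per_symbol, q_acc / samples_per_symbol))
    return symbols
```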

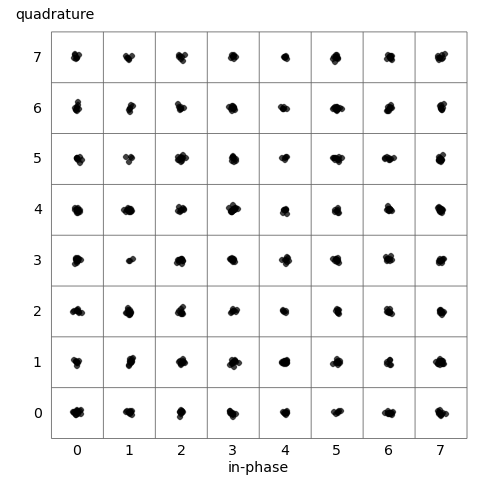

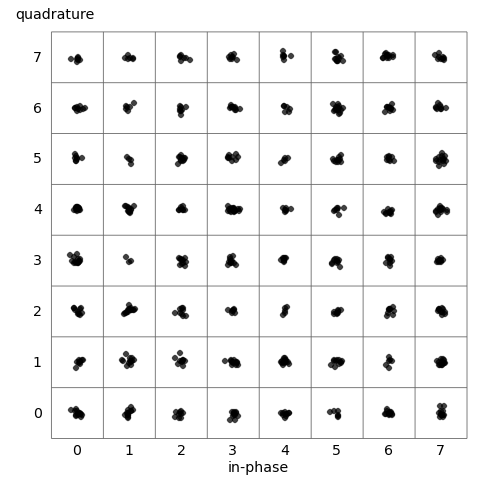

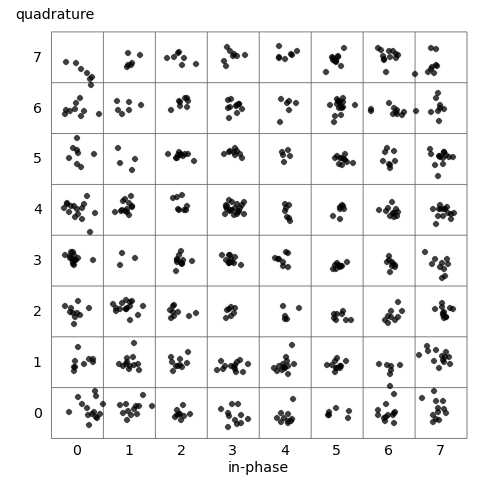

Considering the two signals as the two axes of the coordinate plane, the points observed at the sampling instants should cluster tightly around the nominal centre points of the ‘constellation’, which can be various shapes but is often a square grid. The experiments below give an example.
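The post does not say how bits were assigned to constellation points, so the following is only one common choice: a square grid with Gray coding along each axis, so that horizontally or vertically neighbouring points differ in a single bit and a sample smeared into the next square costs only one bit. `square_qam_constellation` is a hypothetical helper; with three bits per axis it gives the 8×8 grid of 64-QAM.

```python
def gray(n):
    """Binary-reflected Gray code: consecutive n differ in exactly one bit."""
    return n ^ (n >> 1)


def square_qam_constellation(bits_per_axis):
    """Map each symbol value to a point on a square grid, Gray-coded per axis.

    With bits_per_axis = 3 this is 64-QAM: an 8x8 grid at odd coordinates
    -7, -5, ..., 7, carrying six bits per symbol.
    """
    levels = 1 << bits_per_axis
    table = {}
    for ix in range(levels):
        for iq in range(levels):
            # High bits choose the I level, low bits the Q level.
            symbol = (gray(ix) << bits_per_axis) | gray(iq)
            table[symbol] = (2 * ix - levels + 1, 2 * iq - levels + 1)
    return table


qam64 = square_qam_constellation(3)
```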

Results from original audio file

The initial step was to write some code to take a stream of test data and modulate it using QAM, giving a wav file. As a sanity check that the modulation and demodulation code worked properly, I then tried to recover the test data by reading back that same file. No analogue stage is involved at all.
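With no analogue stage, the file round trip is just writing samples to a WAV file and reading them straight back. A minimal sketch using Python's standard-library `wave` module, assuming 16-bit mono PCM at 44100Hz (the helper names are hypothetical); the only error it should introduce is quantisation to 16 bits.

```python
import os
import struct
import tempfile
import wave


def write_wav(path, samples, sample_rate=44100):
    """Write float samples in [-1, 1] as 16-bit mono PCM."""
    ints = [max(-32767, min(32767, round(s * 32767))) for s in samples]
    with wave.open(path, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)  # 2 bytes = 16 bits per sample
        w.setframerate(sample_rate)
        w.writeframes(struct.pack("<%dh" % len(ints), *ints))


def read_wav(path):
    """Read 16-bit mono PCM back into floats in [-1, 1]."""
    with wave.open(path, "rb") as w:
        n = w.getnframes()
        raw = w.readframes(n)
    return [v / 32767 for v in struct.unpack("<%dh" % n, raw)]


def round_trip(samples, sample_rate=44100):
    """Write samples to a temporary WAV file and read them back."""
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "test.wav")
        write_wav(path, samples, sample_rate)
        return read_wav(path)
```

If the demodulator cannot recover the data from the result of `round_trip`, the bug is in the code rather than in any tape or cable.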

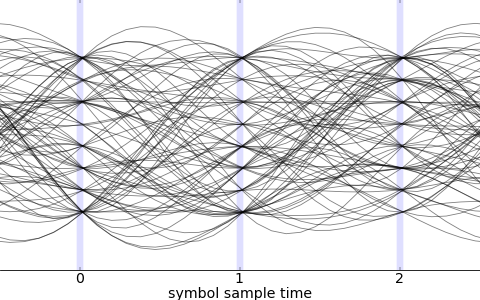

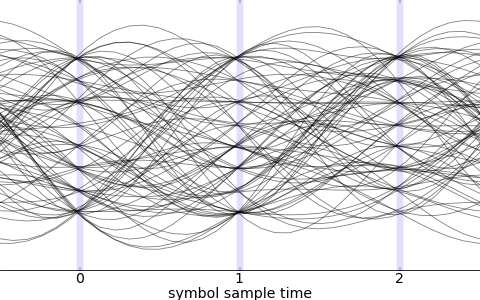

Drawing many traces of one of the recovered signals gives this picture, an ‘eye diagram’, so called because of the vertically-stacked open ‘eyes’ at the sampling instants (light blue vertical lines).
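An eye diagram is just the recovered signal cut into short overlapping pieces, each centred on a sampling instant, and drawn on top of one another. A minimal sketch, assuming an integer number of samples per symbol and a known first sampling instant (`eye_traces` is a hypothetical name); plotting every returned trace on the same axes, for example with matplotlib, gives the picture.

```python
def eye_traces(signal, samples_per_symbol, offset=0):
    """Cut a recovered baseband signal into overlapping two-symbol traces.

    offset is the sample index of the first sampling instant. Each trace
    runs from one symbol period before a sampling instant to one symbol
    period after it, so the instant sits in the middle of the trace.
    """
    half = samples_per_symbol
    traces = []
    centre = offset
    while centre + half <= len(signal):
        if centre - half >= 0:
            traces.append(signal[centre - half:centre + half])
        centre += samples_per_symbol
    return traces


traces = eye_traces(list(range(20)), samples_per_symbol=4, offset=2)
```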

Judging by eye (ha!), it looks like it’s sampling the signal a tiny bit early. It’s clear how important good timing is from how narrow the eyes are compared to the symbol sampling interval.

Looking at the two signals as coordinates in the plane, the ‘received’ constellation was:

The samples are central within their squares, and no symbol would be mis-decoded with these results. So far so good.
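Deciding which symbol was sent amounts to picking the nearest constellation point; for a square grid that is the same as asking which square the sample falls in. A hypothetical sketch of that decision and of the resulting symbol error rate:

```python
def nearest_point(sample, constellation):
    """Return the constellation point closest to a received (I, Q) sample."""
    return min(
        constellation,
        key=lambda p: (p[0] - sample[0]) ** 2 + (p[1] - sample[1]) ** 2,
    )


def symbol_error_rate(received, sent, constellation):
    """Fraction of received samples that decode to the wrong point."""
    errors = sum(
        1 for r, s in zip(received, sent) if nearest_point(r, constellation) != s
    )
    return errors / len(sent)


qpsk = [(-1, -1), (-1, 1), (1, -1), (1, 1)]
ser = symbol_error_rate(
    received=[(0.9, 1.2), (-0.8, -1.1), (0.2, -0.1)],
    sent=[(1, 1), (-1, -1), (-1, 1)],
    constellation=qpsk,
)
```

Here the third sample has drifted into the wrong square, so one symbol in three is mis-decoded.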

Results from loopback cable

The next stage is to bring in audio playback and recording, but for now leave out the actual tape. We play the sound file, over a cable, back to a capture port on the same machine.

This is telling the story out of order, but using a pilot tone to recover timing information, I got results only slightly worse than those from using the original data. The eye diagram still showed cleanly open eyes:

Curiously, this time it looks like it’s sampling slightly late. But the received constellation is still pretty good:

Emboldened by these successes, it was time to try recording onto a real cassette tape (using a NAD 6220), and playing back (using a Walkman WM-EX194).

Results via real cassette tape

This was disastrous.

Reviewing the many experiments I did, a few things seem to have caused trouble. The non-flat frequency response of tape distorts the signals. Apparently, good-quality tape in good-quality equipment can give reasonably flat reproduction up to 15kHz or so, but my set-up could only manage around 9kHz of flat bandwidth; frequencies higher than this were strongly attenuated.

Flutter

The biggest source of error, I think, was the tape’s ‘flutter’, which was huge. The underlying problem is the non-constant speed of the tape moving over the head, whether in recording or playback. When the speed varies slowly, it’s called ‘wow’, and when the speed varies quickly, it’s called ‘flutter’.

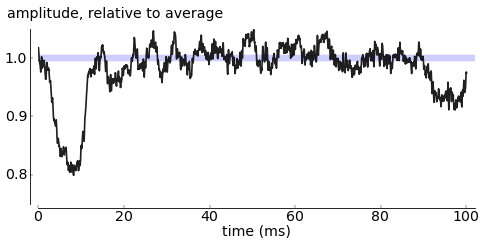

Another problem was variable playback volume, with drop-outs being an extreme example. We can look at the effects of both these in one experiment.

Behaviour of pure tone

We record a pure 4200Hz tone onto tape, then replay and capture it. If we treat this as a QAM signal, then after downconversion and low-pass filtering, the (two-dimensional) signal should be constant.
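The downconversion and low-pass filtering can be sketched in a few lines. This assumes a capture at 44100Hz and uses a plain moving average as the low-pass filter, an illustrative choice rather than necessarily what was used here: at 4200Hz, a 21-sample window spans exactly four cycles of the unwanted 8400Hz image, so the image averages away. The helper names are hypothetical.

```python
import cmath
import math


def downconvert(signal, tone_hz, sample_rate, window):
    """Mix a real signal down to complex baseband (I + jQ) and low-pass it.

    The low-pass filter is a moving average over `window` samples, which
    should span a whole number of cycles at twice the tone frequency.
    """
    mixed = [2 * x * cmath.exp(-2j * math.pi * tone_hz * n / sample_rate)
             for n, x in enumerate(signal)]
    return [sum(mixed[k:k + window]) / window
            for k in range(len(mixed) - window + 1)]


def relative_amplitude(baseband):
    """Amplitude at each sample, scaled relative to its average."""
    mags = [abs(z) for z in baseband]
    mean = sum(mags) / len(mags)
    return [m / mean for m in mags]
```

For an undistorted tone every output value is the same complex number, so `relative_amplitude` is flat at 1; on tape, wobbles in it are the amplitude variation, and drift in the angle of the values is the timing variation.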

Amplitude: Looking first at the nominally constant amplitude, scaled to be relative to its average over the whole capture:

Clearly, this is highly variable. The symbol rates I was attempting were around 4kbaud, so this 100ms interval would contain around 400 symbols. The relative amplitude of one symbol compared to another can be very different. The effect on the received constellation is to expand and contract it around the origin, smearing one square’s points into nearby squares.

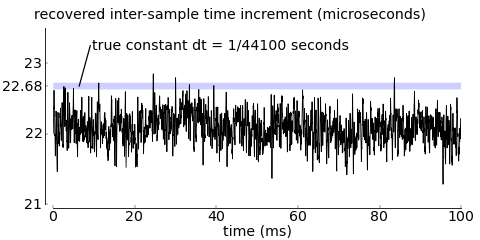

Phase: To study the supposedly constant phase, one way to look at its behaviour is to use the inter-sample change in phase to see how much time appears to have elapsed between samples. This should be constant and equal to the true inter-sample time of (1/44100)s, but it is not:

There is a definite overall negative offset, showing that the replayed audio seems to have been slowed down. This could be caused by a different sampling clock in the playback vs capture channels of the sound card, but other results suggest not. Much more likely is that the Walkman has a slower motor than the NAD deck.
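The apparent elapsed time per sample follows from the phase change of the downconverted tone: if its phase moves by Δφ radians between samples, the tape moved Δφ/(2πf) seconds more (or less) than the nominal 1/44100s. A hypothetical sketch, assuming the tone has already been mixed down to complex baseband:

```python
import cmath
import math


def apparent_sample_intervals(baseband, tone_hz, sample_rate):
    """Estimate how much tape time passed between consecutive samples.

    baseband holds the downconverted tone, one complex value per sample.
    At exactly the right tape speed its phase would stay constant.
    """
    intervals = []
    for prev, cur in zip(baseband, baseband[1:]):
        # Phase of cur relative to prev, wrapped into (-pi, pi].
        dphi = cmath.phase(cur * prev.conjugate())
        # The tone advances 2*pi*tone_hz radians per second of tape time;
        # the mixing oscillator already accounted for 1/sample_rate of it.
        intervals.append(1 / sample_rate + dphi / (2 * math.pi * tone_hz))
    return intervals
```

For example, playback running 1% slow gives a baseband phase that rotates steadily backwards, and every interval comes out as 0.99/44100s, which is the kind of negative offset described above.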

Zooming in (not shown) reveals a fairly strong periodic component in the 1kHz–2kHz range, consistent with there being some underlying physical process. Apparently there is a phenomenon called ‘scrape flutter’ caused by the tape juddering over the head; I could be seeing this.

A constant offset would present no problem; the highly variable speed is a problem. Failing to recover the timing means that the received constellation points are rotated around the centre, with the effect (as with amplitude variation) of smearing points into nearby squares.

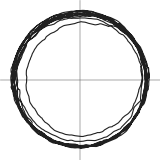

Putting these results together, we can plot the trajectory of the supposedly constant two-dimensional signal in the (I, Q) plane; this is shown to the right. If the received signal were completely undistorted, the ‘trajectory’ would consist of the recovered point not moving over time.

Pilot tone for timing recovery

Recording a constant ‘pilot’ tone on one channel (say the right) should allow recovery of true timing, undoing the effects of flutter. We should then be able to put QAM data on the other (left) channel.
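One way to use the pilot is to accumulate its phase changes to get the ‘tape time’ at which each captured sample was really recorded, then interpolate the data channel back onto evenly spaced tape times. This sketch assumes the pilot has already been mixed down to complex baseband at the pilot frequency and that the tape never runs backwards, and it uses simple linear interpolation; the function names are hypothetical.

```python
import cmath
import math


def tape_time_from_pilot(pilot_baseband, pilot_hz, sample_rate):
    """Cumulative tape time at each captured sample, up to a constant offset."""
    times = [0.0]
    for prev, cur in zip(pilot_baseband, pilot_baseband[1:]):
        dphi = cmath.phase(cur * prev.conjugate())
        times.append(times[-1] + 1 / sample_rate + dphi / (2 * math.pi * pilot_hz))
    return times


def resample_uniform(data, tape_times, sample_rate):
    """Linearly interpolate data onto evenly spaced tape times."""
    out = []
    k = 0
    j = 0
    while True:
        t = tape_times[0] + j / sample_rate
        if t > tape_times[-1]:
            break
        # Advance k so that tape_times[k] <= t <= tape_times[k + 1].
        while k < len(tape_times) - 2 and tape_times[k + 1] <= t:
            k += 1
        t0, t1 = tape_times[k], tape_times[k + 1]
        frac = (t - t0) / (t1 - t0)
        out.append(data[k] + frac * (data[k + 1] - data[k]))
        j += 1
    return out
```

Linear interpolation is the crudest choice; a windowed-sinc or polyphase resampler would add less error at higher frequencies.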

Barker codes

A pilot does not recover the timing completely; it is still only known up to a constant offset. We can identify the offset by including gaps of silence between ‘blocks’ of data, and having each block start with a recognisable pattern. ‘Barker codes’ are optimal from the point of view of giving a strong peak in correlation, greatly reducing the chances of mis-alignment.
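Finding the offset then means sliding the known pattern along the received signal and picking the position with the largest correlation. A minimal sketch using the length-13 Barker code, assuming each element of the code is sent as one symbol on a single real axis (how the pattern was actually embedded isn't stated); `find_block_start` is hypothetical. Because the code's off-peak correlations are at most 1 in size, the true position stands out clearly.

```python
BARKER_13 = [1, 1, 1, 1, 1, -1, -1, 1, 1, -1, 1, -1, 1]


def find_block_start(symbols, code=BARKER_13):
    """Return the offset where the received symbols best match the code."""
    best_offset, best_score = 0, float("-inf")
    for offset in range(len(symbols) - len(code) + 1):
        window = symbols[offset:offset + len(code)]
        score = sum(c * s for c, s in zip(code, window))
        if score > best_score:
            best_offset, best_score = offset, score
    return best_offset


# Low-level noise, then an attenuated Barker code, then silence.
received = [0.1 * (-1) ** k for k in range(7)] + [0.8 * c for c in BARKER_13] + [0.0] * 5
```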

Best constellation achieved

With these techniques, the best results I managed used the following set-up:

- Left channel: symbol rate of 4009 symbols/second, 64-QAM modulation with carrier frequency 2480Hz, excess bandwidth 0.2.

- Right channel: pure pilot tone of 3500Hz.

This would give a raw bitrate of c.24kbps.
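As a quick check of the arithmetic: 64-QAM carries log2(64) = 6 bits per symbol, so 4009 symbols/second gives about 24kbps. Assuming the excess bandwidth of 0.2 refers to a raised-cosine pulse shape (the usual meaning of the term), the signal occupies 4009 × 1.2 ≈ 4.8kHz centred on the carrier. A small hypothetical helper:

```python
import math


def qam_link_budget(symbol_rate, order, carrier_hz, excess_bandwidth):
    """Raw bitrate and occupied band for one raised-cosine QAM signal."""
    bits_per_symbol = math.log2(order)
    bitrate = symbol_rate * bits_per_symbol
    # A raised-cosine spectrum is symbol_rate * (1 + beta) Hz wide in
    # total, centred on the carrier.
    half_width = symbol_rate * (1 + excess_bandwidth) / 2
    return bitrate, (carrier_hz - half_width, carrier_hz + half_width)


rate, band = qam_link_budget(4009, 64, 2480, 0.2)
print(f"{rate / 1000:.1f} kbps, occupying {band[0]:.0f}-{band[1]:.0f} Hz")
```

On that assumption the left channel spanned roughly 75Hz to 4.9kHz, inside the ~9kHz of flat bandwidth the set-up could manage.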

The received constellation was:

This is much noisier than earlier experiments, but not too bad. It has a symbol error rate of around 2%.

Interlude: how the grown-ups do it

Extract from “DVB-C2: Ready — Plugfest — Go”, Kabel Deutschland, February 2013


Apparently the ‘data over cable modem’ standards bodies are exploring very high-order modulation schemes, with 65,536-QAM being mentioned. I found the example to the right, which is of a 16,384-QAM signal being received as part of an experimental transmission. The full constellation is 128×128 points; only a small snippet is shown here.

Failure to achieve higher bitrates

For video, I needed to fit more than 24kbps onto the tape. Supposing the tape speed varies at 2kHz because of flutter, we can think of the pilot as being FM-modulated, which means the pilot tone takes up maybe 4kHz of bandwidth. The fluttering pilot tone doesn’t leave much bandwidth on its channel, but I tried to fit some data into the higher-frequency part anyway. The other channel contained a handful of QAM signals at different carrier frequencies, with smaller symbol rates to try to make the channel appear roughly flat for each signal.
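The 4kHz figure is consistent with Carson's rule, a standard rule of thumb for FM bandwidth: B ≈ 2(Δf + f_m), where Δf is the peak frequency deviation and f_m is the modulating frequency. With a 2kHz flutter rate and a hypothetical ±1% speed variation on the 3500Hz pilot (Δf = 35Hz), the deviation barely matters and the bandwidth is dominated by 2f_m:

```python
def carson_bandwidth(peak_deviation_hz, modulating_hz):
    """Carson's rule: approximate bandwidth of an FM signal, in Hz."""
    return 2 * (peak_deviation_hz + modulating_hz)


pilot_hz = 3500
speed_variation = 0.01  # hypothetical: tape speed wanders by +/-1%
flutter_hz = 2000       # flutter rate supposed in the text
deviation = pilot_hz * speed_variation
print(f"{carson_bandwidth(deviation, flutter_hz):.0f} Hz")  # about 4kHz
```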

Unfortunately, although this worked well when re-reading the original wav file, everything turned to complete mush when going via real tape. Possible reasons: the limited dynamic range meant each individual signal appeared lower in power; flutter frequencies outside the range I’d allowed for meant interference from one signal to the next, or between pilot and signal; tape hiss at higher frequencies. I tried many variants, but didn’t get anywhere.

Error-correcting codes

I did quite a lot of reading into various error-correction techniques. Reed-Solomon codes are a cool idea, as are Low-Density Parity-Check codes. But nothing would magically recover a reliable data-stream from what I had.

Result: no workable system

The highest raw bitrate I was ever likely to get at low error rates was maybe 32kbps. I just about persuaded myself that very low-resolution video at 28kbps was watchable, and that sound at 8kbps was listenable. In total, 36kbps, and I would also need to leave a good allowance for error correction overhead. There was a big gap between what was possible and what was required. Boo.
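The shortfall is easy to quantify once a code rate is chosen. As a hypothetical example, a Reed-Solomon (255, 223) code spends 32 of every 255 bytes on parity, so 36kbps of payload would need about 41kbps on tape:

```python
def required_raw_bitrate(payload_kbps, code_rate):
    """Bitrate needed on tape to carry payload_kbps after FEC at code_rate."""
    return payload_kbps / code_rate


payload_kbps = 28 + 8      # video + audio, from the estimate above
rs_code_rate = 223 / 255   # hypothetical choice: Reed-Solomon (255, 223)
needed = required_raw_bitrate(payload_kbps, rs_code_rate)
print(f"need about {needed:.1f} kbps on tape, vs maybe 32 kbps achievable")
```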

It’s possible that by working and reading harder I could find techniques which would get me more bits per second, but the shortfall compared to the required bitrate made this seem unlikely.

Any way to salvage the idea?

There are a couple of ways I might be able to get something to work:

- Use a device with good playback stability, e.g., a small screenless portable digital audio player which can handle lossless files.

- Abandon the idea of storing arbitrary video, and instead find some special-purpose encoding for some kind of animations. (This idea from Oliver Nash.)

But these would be significantly less cool than the original idea, so I admitted defeat. It was fun trying.

Walkmans are expensive

I spent a good while poking around on eBay looking for better Walkmans than the cheap ones I’d got for this project. It turns out that you can spend hundreds of euro on a good-quality vintage Walkman. (I didn’t.) Even blank cassettes are collectors’ items, it seems.

Related work: VinylVideo

[Update, 2018-09-19:] Thanks to Malcolm Tyrrell for bringing VinylVideo to my attention. This is a system for storing video on a vinyl record. The stereo audio is fed to a decoder box which produces a video signal, with mono audio. They claim that ‘VinylVideo® is an analog format that doesn’t use digital compression at all. As on traditional analog television, VinylVideo breaks up every picture into a picture lines. … a completely new and special analog video signal format had to be developed.’ The translator runs on a Raspberry Pi. I didn’t find any further information on the video encoding. The excellent Techmoan covers it here.

Related work: Pablo Vidal’s MiniDisc video player

[Update, 2022-07-12:] I recently received an email from Pablo Vidal (@this_may_be_it), who reported success with encoding video into audio, which he then recorded to and played back from MiniDisc. Pablo is at pains to give credit to the audio modem work of Roman Zeyde as the basis for the project. Pablo’s process involves:

- using ffmpeg to produce a low-bitrate x264 video file (64kbps video and 22kbps audio);

- splitting that file byte by byte into two streams (gist-1, gist-2);

- using a version of Zeyde’s modem software to encode those streams (gist-3);

- recording the resulting left and right audio channels as one stereo track onto MiniDisc (gist-4);

- playing the stereo audio back into two decoders and re-interleaving the two byte-streams (gist-5); and

- feeding the result to mplayer.
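The splitting and re-interleaving steps are simple. Pablo's gists contain his actual code; the sketch below only assumes that bytes alternate between the two channels (even offsets on the left, odd on the right), and the function names are hypothetical.

```python
from __future__ import annotations


def split_bytes(data: bytes) -> tuple[bytes, bytes]:
    """Alternate bytes between two streams: even offsets left, odd right."""
    return data[0::2], data[1::2]


def interleave_bytes(left: bytes, right: bytes) -> bytes:
    """Inverse of split_bytes: merge the two decoded streams back together."""
    out = bytearray()
    for i in range(len(left)):
        out.append(left[i])
        if i < len(right):
            out.append(right[i])
    return bytes(out)


left, right = split_bytes(b"hello")
```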

He provided a video of successful playback (which starts at about 0:50):

I think this is very nice work!

Related work: Analog video on audio cassette

Pablo also drew my attention to the work of Kris Slyka, who put a low-frame-rate analog video signal onto audio cassette.